How’s that for an inspired title? In this article we’ll be exploring various topics related to BCS in SPS 2010. We’ll be discussing:

- Types of external content types,

- The Bigint problem,

- Inspecting the XML of the BDC Metadata Model,

- BCS terminology,

- Creating a custom .NET assembly connector,

- Debugging an ECT built on the custom .NET assembly connector,

- Creating a data access layer using the Entity Framework 4,

- Consuming (and building) a WCF 4 service,

- Deployment,

- Get IntelliSense support for BDC models,

- Standard search support,

- Creating a profile page,

- Consuming a SQL Server table directly,

- ECT Associations,

- Secure Store support.

If you are suspecting, judging from the list of topics, that this is going to be a long article, you’d be absolutely right.

Different types of External content types

Creating a BCS external content type and connecting to a SQL Server database using SharePoint Designer isn’t particularly hard to do. If we were living in a video game, this would be level 1 of the game. Which game you ask? Why, Donkey Kong of course!

Besides creating a BCS ECT for SQL Server, you also have the option to create ECT’s for .NET assemblies and .asmx or WCF services. In this article, you will see all of the three types in action.

The Bigint scenario

There is one important thing to note though. When it comes to SQL Server, we absolutely love to use primary keys of the Bigint datatype. Unfortunately, in BCS they get converted to System.Int64 and this data type is not supported in external lists. We’ve seen lots of places where people advise to avoid using this datatype in BCS scenarios, but how can you if you have to work with an existing database? If you happen to be working on one of our legacy applications, you’re bound to meet this problem!

Now, the max value of a Bigint is 9,223,372,036,854,775,807, and the max value of System.Int32 is 2,147,483,647, so if the max id of your table falls well within the System.Int32 range, the Bigint scenario is a valid scenario where you need to build a .NET assembly connector or a WCF service wrapper to circumvent this problem and handle the conversion of Bigints to System.Int32 and vice versa.

About the custom .NET assembly connector

BCS also supports the creation of connectors via Visual Studio.NET 2010. Such connectors potentially harness more power, but in video game terminology, they are a couple levels up.

There are two kinds of connectors:

- .NET Assembly Connectors, they connect to a specific system (say, your customers database).

- Custom Connectors, they connect to a generic type system (say, SQL Server).

If you’re not a 3rd party vendor planning to build custom connectors (a free tip: don’t build one for SQL Server, a large company beat you to it), you probably belong to a group that is far more likely to build .NET Assembly Connectors. We’re also members of that group, so please come and join us.

Creating a Hello World .NET assembly connector

If we were attending a party, this section discusses to say hi to the host, but doesn’t mention that you might want to bring a gift too. In this section, we’ll be creating a .NET assembly connector that is as easy as possible and use it to build an external list that allows read operations only. We’ll be using an imaginary SQL Server table that contains an integer called ProductID and a Name that is a string. The ProductID is the key that uniquely identifies products.

Please note: Don’t create that table yet, we don’t need it in this section. We’ll create it later.

Create a start project

First, we’ll be creating a new .NET assembly connector in VS.NET:

- Start Visual Studio 2010.

- Create a New Project.

- In the Installed Templates section, under the SharePoint > 2010 node, choose Business Data Connectivity Model.

- We’ll name the project: LoisAndClarkConnectors.

- Click OK.

- Choose a SharePoint site and accept the only option available: Deploy as a farm solution.

- Click Finish. You should note the presence of a new folder called BdcModel1.

- Select BdcModel1, and, using the Properties window, change the folder name to ProductModel.

- The focus should still be on the ProductModel node. You should see an entity called Entity1 on the Entity Design Surface. You don’t need it, delete it.

- If you expand the ProductModel node, you’ll notice there are a couple of items: BdcModel1.bdcm, Entity1.cs, and Entity1Service.cs.

- Delete Entity1.cs and Entity1Service.cs.

- Rename BdcModel1.bdcm to ProductModel.bdcm using it’s File Name property.

Being superstitious, we’ll stop at step 12. Now, we’re at a place where we’ve got everything cleaned up nicely and we’ve created ourselves a fine place to start.

Please note: The part about being superstitious is actually not true, we’re planning to create steps 13 like there’s no tomorrow in the rest of the article. It was just that we were out of steps to add to the list.

Inspecting the xml of the BDC Metadata model

At this point, it’s good to understand that the BDC Metadata Model (the .bdcm file), is actually an XML file. If you want to inspect the XML, do the following:

- Right-click the ProductModel.bdcm file and choose Open With.

- Choose Yes if a dialog appears asking you if you want to save your changes.

- Choose XML (Text) Editor in the Open With – ProductModel.bdcm dialog.

- Click OK.

- Choose Yes in the dialog that asks if you want to close the bdcm file.

Now, you’re looking at the XML of the BDCM file. Not as easy as pressing F7, but ok. If you want to see the Design Surface again, double click the bdcm file and choose Yes to close the XML view of the BDCM file.

BCS terminology prerequisites

In the next part, we’ll be creating a Product entity that supports two types of operations: read the entire product list, and return a single product item. In BCS terminology, these types of operations are called stereotypes or stereotyped operations.

Stereotypes predefine operations types that can be defined against the external system. In other words, those are the operation types that can be used and have meaning within BCS. The most basic stereotypes in BCS are: Read Item, Read List, Create, Update, and Delete. In addition, there are more stereotypes available (15 or so). Of course, the “reading the entire product list” and “returning a single product item” operations correspond to the BCS stereotypes Read List and Read Item.

It’s important to keep in mind that if you want to support an operation in BCS you need to create both a Method and a MethodInstance element in the BDC Metadata model.

The Method element defines what the operation looks like in terms of defining input parameters, output parameters, and filters. The following BDCM fragment describes a method called ReadList that returns a collection of products and accepts no input parameters:

<Methods>

<Method Name=”ReadList”>

<Parameters>

<Parameter Name=”productList” Direction=”Return”>

<TypeDescriptor Name=”ProductList” TypeName=”System.Collections.Generic.IEnumerable`1[System.String]” IsCollection=”true”>

<TypeDescriptors>

<TypeDescriptor Name=”Product” TypeName=”System.String” /></TypeDescriptors></TypeDescriptor></Parameter>

</Parameters>

… Method instance info omitted for clarity.

</Methods>

A MethodInstance element defines which stereotype is implemented. The following BDCM fragment describes a method instance called ReadListInstance that is a Finder BCS stereotype (by default, this method will be named ReadList, but we find this confusing).

<Methods>

<Method Name=”ReadList”>

<Parameters>

<Parameter Name=”productList” Direction=”Return”>

<TypeDescriptor Name=”ProductList” TypeName=”System.Collections.Generic.IEnumerable`1[System.String]” IsCollection=”true”>

<TypeDescriptors>

<TypeDescriptor Name=”Product” TypeName=”System.String” /></TypeDescriptors></TypeDescriptor></Parameter>

</Parameters>

<MethodInstances>

<MethodInstance Name=”ReadListInstance” Type=”Finder” ReturnParameterName=”productList” ReturnTypeDescriptorPath=”ProductList” />

</MethodInstances></Method>

</Methods>

A method is like a prototype of the operation and can contain multiple method instances, although typically developers implement a 1-1 relationship to keep things no more complicated than necessary. A method instance is like the implementation of the method.

Creating a Product entity

On with the creation of the Product entity… First, we’ll only add a Product entity to the Design surface, and define a unique identifier for it:

- From the Toolbox, drag an Entity to the Design Surface.

- Using it’s Name property, call it Product.

- Right-click the Product entity and choose Add > Identifier.

- Call the identifier ProductID,

- In the Properties window, set the Type Name to System.Int32.

- Set the Identifier Entity to Product and set the Identifier property to ProductID.

- Set the Read-only property to True. This is necessary because users should not be able to change the unique id.

Mapping the Product entity to a DTO class

The Product entity needs to be mapped to a .NET class (the DTO class or POCO) containing properties that correspond to the entity. First, we’ll have to create the .NET class:

- Right-click the ProductModel folder and choose Add > Class.

- Call the class Product.cs and click Add.

- Add the following code:

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

namespace LoisAndClarkConnectors.ProductModel

{

public class Product

{

public int ProductId { get; set; }

public string Name { get; set; }

}

}

At this point you should take note of two things:

- Since it’s a simple example, we’ll only be adding a Name property, and of course, a unique identifier. If the unique identifier is missing, you won’t be able to create an external content type for it. So, this is about the minimum amount of code we need to get by.

- The other thing to make a strong note of is the fact that we’ve called the property ProductId. In the Product entity on the design surface we’ve called the unique identifier ProductID, spelled with different casing. This is absolutely on purpose, but will lead to errors later on. Because we feel this mistake is so easy to make and the Visual Studio tooling doesn’t check for this, we want to show what happens and how you can detect this mistake and correct it.

Next, we need to create a method that returns all products. Or, to put it in BCS terms, we need to implement the Finder stereotype. To do this, we need to describe it in a BCS method that also defines it parameters. We’ll also define a BCS method instance, that refers to the Finder stereotype.

- Double-click ProductModel.bdcm.

- At the bottom window, click BDC Method Details.

- In the Methods section, click Add a Method and choose Create a Finder Method.

- Change the name (thru the Property window) to ReadProducts.

- Notice that VS.NET created a return parameters list called productList. Rename it to ProductList.

- The TypeDescriptor of the ReadProducts list is not ok, click it and choose Edit.

- In the Properties window, choose Type Name.

- Click the Current Project tab.

- Click Project (referring to the Project.cs you created earlier).

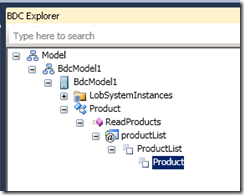

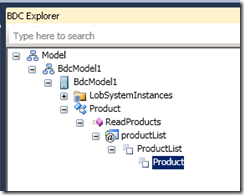

Right now you’ve created a BCS method that returns a collection of Product instances, and that’s exactly what we want. Now it’s time to switch to the BDC Explorer, and finish the description of the various types we need in the ReadProducts method. The BDC Explorer should look like this:

Up until this point VS.NET has inferred that the productList return parameter (@parameter) returns a collection of Product objects. Strangely, it doesn’t know the type of a Product and thinks it’s a System.String. We’ll need to correct that:

- Click Type Name in the Properties window.

- Click Current Project.

- Click Product.

Although now the BDCM model knows that a ProductList contains a collection of Product objects AND knows that a Product refers to the Product class, it still needs more information. It also doesn’t know anything about the members of the Product class, so we’ll need to add them too.

- Right-click the Product node in BDC Explorer and choose Add Type Descriptor.

- Give it the following name: ProductID.

- Give it the following type name: System.Int32.

- Add another type descriptor to the Product node.

- Give it the name: Name.

- The default type, System.String, is good.

At this point, we’ve created in enough detail what the BCS method looks like. Now, there’s still the job left of defining the BCS method instance.

- In the BDC Method Details pane, select the ReadProducts method instance.

- Since it’s too easy to confuse with the BCS ReadProducts method, change it’s name property to ReadProductsInstance.

Adding a specific finder

Before we’re able to create an external list, we need not only a Finder method, but also a SpecificFinder method that’s able to return the specifics of any given product. We’ll create one in this section.

- In the BDC Method Details pane, click Add a Method.

- Select Create Specific Finder Method.

- It’s called ReadItem but let’s change it’s name to ReadProduct.

- Change the parameter names to Product and ProductID.

- For the Product parameter, click the Type Descriptor of ProductID and set the Identfier Entity to Product. Set the Identifier property to ProductID.

- Set theead-only property to True.

- Change the name of the method instance (also called ReadProduct) to ReadProductInstance.

Note that it already has an In parameter allowing BCS to pass in a product id parameter. It also has a return parameter that gives back a Product object. Nothing else needs to be done here at this point.

Switch back to the Solution Explorer and double-click ProductService.cs. As you can see, there are two static methods waiting for you to implement them. Since we’re only creating a Hello World example we’ll do this in the simplest way possible:

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

namespace LoisAndClarkConnectors.ProductModel

{

public partial class ProductService

{

public static IEnumerable<Product> ReadProducts()

{

return new List<Product>()

{

new Product() { ProductId = 1, Name = “Blue” },

new Product() { ProductId = 2, Name = “Red” },

};

}

public static Product ReadProduct(int productID)

{

return new Product() { ProductId = 1, Name = “Blue” };

}

}

}

Debugging the ECT

Remember that we misspelled ProductId on purpose in Product.cs, and that it should be ProductID. We’ve found that SPD is a great tool for finding such mistakes at an early stage (unfortunately, there’s no tool for checking at design time).

Let’s see what happens if we press F5, start the project, and deploy the external content type we’ve created.

- The SharePoint site opens.

- Choose Site Actions > Edit in SharePoint Designer.

- Click External Content Types.

- Click the Product ECT.

- Double-click ReadProductInstance.

- Choose Yes in the dialog asking you to create a connection and edit the operation.

The Read Item window opens and notifies you there is an error.

- Click Next two times.

- Here you see that the identifier ProductID is not mapped to a data source element. You’ll also notice that the data source element ProductId is not checked.

We’ll fix the problem at the source later. For now, just click Cancel. Let’s go ahead and create the external list anyway.

- In SPD, click Lists and libraries.

- In the ribbon, click External List.In the External Content Types Picker, double-click Product.

- Call the list ProductList and click OK.

Regardless if the permissions you may have in SharePoint, you still need to set explicit permissions for the ECT.

- Open SCA.

- Click Application Management.

- In the Service Applications section, click Manage service applications.

- Choose Business Data Connectivity Service.

- From the Product action (ECB) menu, choose Set Permissions.

- Add your account and give all permissions, then click OK.

Go back to the original SharePoint site that should now contain the ProductList list.

Click it. You’ll see the message:

Unable to display this Web Part. To troubleshoot the problem, open this Web page in a Microsoft SharePoint Foundation-compatible HTML editor such as Microsoft SharePoint Designer. If the problem persists, contact your Web server administrator, and a correlation id.

Copy the correlation id and paste it in SPLIT (the SharePoint Log Investigation Tool, it can be downloaded from CodePlex). Near the bottom of the list of related messages, you’ll find a message stating that a property with the name of ProductID can’t be found on the parent object. That’s another hint that you’ve misspelled something.

Now, go back to VS.NET and stop debugging. Correct the situation and rename the property in Product.cs to ProductID. Do the same in ProductService.cs and see if the project compiles. Then, press F5 again. If you want, check ReadProductInstance in SPD. We feel this is the best way to check if everything works, and the best way to get a good description of the problems.

Please note: If you’re getting an access denied message, you need to set the permissions in SCA for this ECT again, as described earlier in this article.

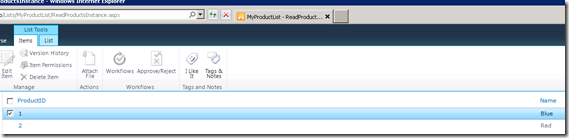

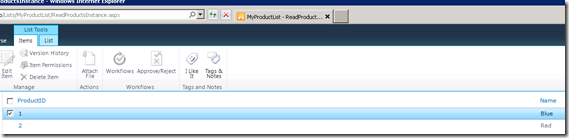

If everything is fine and dandy, you’ll also be able to look at the ProductList external list.

Please note: Debugging is quite easy. Just add break points to the ProductService.cs class.

Show me the money!

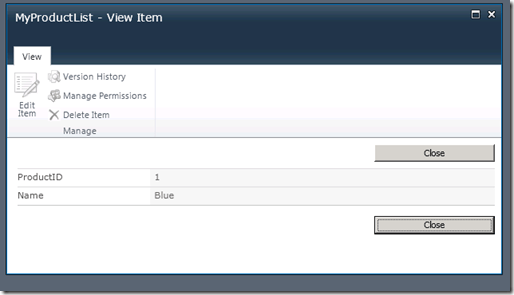

Let’s inspect the results. The product overview looks like this (the call to the ReadProducts() method):

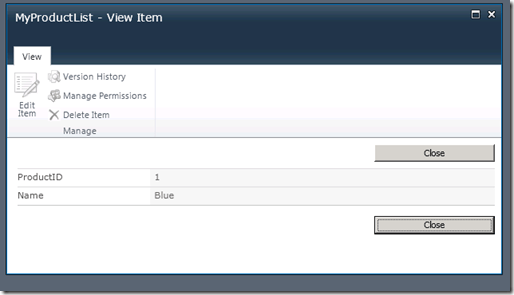

The product detail view (the call to the ReadProduct() method) looks like this:

At this point, the XML for the BDCM model looks like this (if you still have problems, you may want to edit the BDCM file in XML mode and compare what’s different):

<?xml version=”1.0″ encoding=”utf-8″?>

<Model xmlns:xsi=”http://www.w3.org/2001/XMLSchema-instance” xmlns:xsd=”http://www.w3.org/2001/XMLSchema” xmlns=”http://schemas.microsoft.com/windows/2007/BusinessDataCatalog” Name=”BdcModel1″>

<LobSystems>

<LobSystem Name=”BdcModel1″ Type=”DotNetAssembly”>

<LobSystemInstances>

<LobSystemInstance Name=”BdcModel1″ />

</LobSystemInstances>

<Entities>

<Entity Name=”Product” Namespace=”LoisAndClarkConnectors.ProductModel” Version=”1.0.0.35″>

<Properties>

<Property Name=”Class” Type=”System.String”>LoisAndClarkConnectors.ProductModel.ProductService, BdcModel1</Property>

</Properties>

<Identifiers>

<Identifier Name=”ProductID” TypeName=”System.Int32″ />

</Identifiers>

<Methods>

<Method Name=”ReadProducts”>

<Parameters>

<Parameter Name=”ProductList” Direction=”Return”>

<TypeDescriptor Name=”ProductList” TypeName=”System.Collections.Generic.IEnumerable`1[[LoisAndClarkConnectors.ProductModel.Product, BdcModel1]]” IsCollection=”true”>

<TypeDescriptors>

<TypeDescriptor Name=”Product” TypeName=”LoisAndClarkConnectors.ProductModel.Product, BdcModel1″ IsCollection=”false”>

<TypeDescriptors>

<TypeDescriptor Name=”ProductID” TypeName=”System.Int32″ IsCollection=”false” ReadOnly=”true” IdentifierEntityName=”Product” IdentifierEntityNamespace=”LoisAndClarkConnectors.ProductModel” IdentifierName=”ProductID” />

<TypeDescriptor Name=”Name” TypeName=”System.String” /></TypeDescriptors></TypeDescriptor></TypeDescriptors>

</TypeDescriptor></Parameter>

</Parameters>

<MethodInstances>

<MethodInstance Name=”ReadProductsInstance” Type=”Finder” ReturnParameterName=”ProductList” ReturnTypeDescriptorPath=”ProductList” />

</MethodInstances></Method>

<Method Name=”ReadProduct”>

<Parameters>

<Parameter Name=”Product” Direction=”Return”>

<TypeDescriptor Name=”Product” IsCollection=”false” TypeName=”LoisAndClarkConnectors.ProductModel.Product, BdcModel1″>

<TypeDescriptors>

<TypeDescriptor Name=”ProductID” IsCollection=”false” TypeName=”System.Int32″ IdentifierEntityName=”Product” IdentifierEntityNamespace=”LoisAndClarkConnectors.ProductModel” IdentifierName=”ProductID” ReadOnly=”true” />

<TypeDescriptor Name=”Name” TypeName=”System.String” /></TypeDescriptors></TypeDescriptor></Parameter>

<Parameter Name=”ProductID” Direction=”In”>

<TypeDescriptor Name=”ProductID” TypeName=”System.Int32″ IdentifierEntityName=”Product” IdentifierEntityNamespace=”LoisAndClarkConnectors.ProductModel” IdentifierName=”ProductID” /></Parameter>

</Parameters>

<MethodInstances>

<MethodInstance Name=”ReadProductInstance” Type=”SpecificFinder” ReturnParameterName=”Product” ReturnTypeDescriptorPath=”Product” />

</MethodInstances></Method>

</Methods>

</Entity>

</Entities>

</LobSystem>

</LobSystems>

</Model>

So, reaching the end of this section, did we earn enough points to take a peek at another golden oldie game? We sure did! How about “Raid over Moscow”?

Building an entity framework wrapper

Of course, this was a simple hello world example. We need to take this example to the next level, and write the data access logic to make a real impact on the database. That way, we can come to a point were we have a full-fledged example of a BCS ECT working against a single table. We’ve chosen to use Entity Framework to accomplish this.

Start with creating a new database called TestBCS and execute the following SQL script to create the Product table we’re using for testing purposes:

USE [TestBCS]

GO

/****** Object: Table [dbo].[Product] Script Date: 2/7/2012 11:44:14 AM ******/

SET ANSI_NULLS ON

GO

SET QUOTED_IDENTIFIER ON

GO

CREATE TABLE [dbo].[Product](

[ProductId] [int] IDENTITY(1,1) NOT NULL,

[Name] [nchar](50) NULL,

CONSTRAINT [PK_Product] PRIMARY KEY CLUSTERED

(

[ProductId] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

We’re not really looking forward to creating a solution with the first version of the Entity Framework and the problems associated with that older version. So, we’ll build a data access layer using v2, Entity Framework 4. This does have a significant consequence though: since SPS 2010 doesn’t support .NET 4, we’ll eventually have to build a WCF service around it as a wrapper and call that from the BCS ECT. Just as well, the solution seems cleaner that way and since we haven’t demonstrated how to build an ECT against a WCF service, it’ll add considerable flavor to this article as well.

Let’s not worry about that right now. First, we’ll just concentrate on creating the data access layer using the Entity framework.

- Start a new VS.NET project.

- Make a Class Library.

- Call it Product.Data.

- Click OK.

- Right-click the project and type the following default namespace: LoisAndClark.Product.Product.Data.

- Delete Class1.cs.

- Add a new ADO.NET Entity Data Model item and just call it Model.edmx.

- On the Entity Data Model Wizard dialog, choose Generate from database.

- Click Next.

- Click New Connection and point to the TestBCS database.

- Click Next.

- Select the Product table.

- Uncheck the Pluralize or singularize generated object names.

- Click Finish.

- Add a new project, a Console application, to the Product.Data solution. We’ll call it the ProductTester.

- Right-click it and choose Set as Startup project.

- Add a Project reference to Product.Data.

- Add a reference to System.Data.Entity.

In Program.cs, add the following using statement: using LoisAndClark.Product.Data;.

- Add a new item of template type: Application Configuration File.

- Add the following <connectionStrings> section to it (make a copy from this section from Product.Data):

<connectionStrings>

<add name=”TestBCSEntities” connectionString=”

metadata=res://*/Model.csdl|res://*/Model.ssdl|res://*/Model.msl;

provider=System.Data.SqlClient;provider connection string="data source=ASTRO\CTP0;initial catalog=TestBCS;integrated security=True;multipleactiveresultsets=True;App=EntityFramework"” providerName=”System.Data.EntityClient” />

<add name=”TestBCSEntities1″ connectionString=”

metadata=res://*/Model.csdl|res://*/Model.ssdl|res://*/Model.msl;

provider=System.Data.SqlClient;provider connection string="data source=ASTRO\CTP0;initial catalog=TestBCS;integrated security=True;multipleactiveresultsets=True;App=EntityFramework"” providerName=”System.Data.EntityClient” />

</connectionStrings>

We’ve included the source code of our main class. If you look carefully, it contains all the building blocks required for creating the basic set of BCS stereotypes (find, find all, create, update, delete).

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using LoisAndClark.Product.Data;

namespace ProductTester

{

class Program

{

static void Main(string[] args)

{

Add();

Update();

Delete();

}

private static void Add()

{

using (var ctx = new TestBCSEntities1())

{

ctx.Product.AddObject(new Product()

{

Name = “Test”

});

ctx.SaveChanges();

}

}

private static int GetId(string name)

{

int productId;

using (var ctx = new TestBCSEntities1())

{

Product product = (from p in ctx.Product

where p.Name == name

select p).First();

productId = product.ProductId;

}

return productId;

}

private static void Update()

{

int id = GetId(“Test”);

using (var ctx = new TestBCSEntities1())

{

// We’ll do something similar later to implement the Finder and SpecificFinder BCS stereotypes.

Product product = (from p in ctx.Product

where p.ProductId == id

select p).First();

Console.WriteLine(“id {0} name {1}”, product.ProductId, product.Name);

product.Name = “UpdatedName”;

ctx.SaveChanges();

}

}

private static void Delete()

{

int id = GetId(“UpdatedName”);

using (var ctx = new TestBCSEntities1())

{

// We’ll do something similar later to implement the Finder and SpecificFinder BCS stereotypes.

Product product = (from p in ctx.Product

where p.ProductId == id

select p).First();

ctx.Product.DeleteObject(product);

ctx.SaveChanges();

}

}

}

}

By now, you know the drill. Once we have reached the end of a significant section, it’s time for a small reward. Leave a comment if you have played “Aztec Challenge”:

building The WCF 4 wrapper service

Using Entity Framework 4 is a great thing, but it does complicate things a little. Now, we need a wrapper service to consume our .NET 4 made DLL. It would be folly to create an .asmx service for it, so of course we’ll build a WCF 4 service around it. If you ever tried it, you know that creating a .NET 4 application under a SharePoint site aint no picnic, so we’ll be creating a separate web site for it later.

Please note: We’re skipping the business layer altogether, just because we can and this is only an example.

Let’s start creating a WCF service.

- In VS.NET, right-click the solution and choose Add > New Web Site.

- Accept all defaults, and call it ProductServices.

- Click OK.

- Add a project reference to Product.Data.

- Add a reference to System.Data.Entity.

- Expand the App_Code folder and delete IService1.cs and Service1.cs.

- Add a new class to the App_Code folder called IProductService.cs (the actual im.

- Add a new class to the App_Code folder called ProductService.cs.

- Add a new class to the App_Code folder called SingleProduct.cs.

- Add a new class to the App_Code folder called AllProductsResponse.cs.

- Add a new class to the App_Code folder called ProductRequest.cs.

- Open Service.svc and change the code behind to ProductService.cs, so that it looks like:

<%@ ServiceHost Language=”C#” Debug=”true” Service=”ProductServiceLibrary.ProductService” CodeBehind=”~/App_Code/ProductService.cs” %>

Just above the closing </configuration> element of the web.config file, add the following connection section:

<connectionStrings>

<add name=”TestBCSEntities” connectionString=”

metadata=res://*/Model.csdl|res://*/Model.ssdl|res://*/Model.msl;

provider=System.Data.SqlClient;provider connection string="data source=ASTRO\CTP0;initial catalog=TestBCS;integrated security=True;multipleactiveresultsets=True;App=EntityFramework"” providerName=”System.Data.EntityClient” />

<add name=”TestBCSEntities1″ connectionString=”

metadata=res://*/Model.csdl|res://*/Model.ssdl|res://*/Model.msl;

provider=System.Data.SqlClient;provider connection string="data source=ASTRO\CTP0;initial catalog=TestBCS;integrated security=True;multipleactiveresultsets=True;App=EntityFramework"” providerName=”System.Data.EntityClient” />

</connectionStrings>

Let’s discuss the actual class implementations one by one. First of all, we’ll need a type that can represent a single product, so we can use it as the return type for our SpecificFinder method:

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using System.Runtime.Serialization;

namespace ProductServiceLibrary

{

[DataContract]

public class SingleProduct

{

[DataMember]

public int ProductID { get; set; }

[DataMember]

public string Name { get; set; }

}

}

Our Finder method needs to return a list of all products, so we’ll be needing a type for that too. Note that later on we’ll be creating an ECT in SPD communicating with our WCF service, this compels us to keep things simple. This means you can’t use more than 1 level of nested types, because SPD won’t understand it.

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using System.Runtime.Serialization;

namespace ProductServiceLibrary

{

[DataContract]

public class AllProductsResponse

{

[DataMember]

public List<SingleProduct> Products { get; set; }

}

}

For update scenarios, we’ll be passing in a single parameter representing a single product:

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using System.Runtime.Serialization;

namespace ProductServiceLibrary

{

[DataContract]

public class ProductRequest

{

[DataMember(IsRequired = false)]

public int ProductID { get; set; }

[DataMember]

public string Name { get; set; }

}

}

The interface for our WCF service must contain operations for the basic 5 BCS stereotypes:

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using System.ServiceModel;

namespace ProductServiceLibrary

{

[ServiceContract]

public interface IProductService

{

[OperationContract]

List<SingleProduct> FindAllProducts();

[OperationContract]

SingleProduct FindSpecificProduct(int productID);

[OperationContract]

int CreateProduct(ProductRequest request);

[OperationContract]

void UpdateProduct(ProductRequest request);

[OperationContract]

void DeleteProduct(int productId);

}

}

The actual implementation of our WCF service is leveraging our Entity Framework model that we’ve created in an earlier section and looks like this:

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using LoisAndClark.Product.Data;

namespace ProductServiceLibrary

{

public class ProductService : IProductService

{

public List<SingleProduct> FindAllProducts()

{

var response = new List<SingleProduct>();

using (var ctx = new TestBCSEntities1())

{

var products = from p in ctx.Product

select p;

foreach (var product in products)

{

response.Add(

new SingleProduct()

{

ProductID = product.ProductId,

Name = product.Name

});

}

}

return response;

}

public SingleProduct FindSpecificProduct(int productID)

{

var response = new SingleProduct();

using (var ctx = new TestBCSEntities1())

{

var products = from p in ctx.Product

where p.ProductId == productID

select p;

if (products.Count() == 1)

{

return new SingleProduct()

{

ProductID = products.First().ProductId,

Name = products.First().Name

};

}

else

{

return null;

}

}

}

public int CreateProduct(ProductRequest request)

{

int newId;

using (var ctx = new TestBCSEntities1())

{

var newProduct = new Product() { Name = request.Name };

ctx.Product.AddObject(newProduct);

ctx.SaveChanges();

newId = newProduct.ProductId;

}

return newId;

}

public void UpdateProduct(ProductRequest request)

{

using (var ctx = new TestBCSEntities1())

{

Product product = (from p in ctx.Product

where p.ProductId == request.ProductID

select p).First();

product.Name = request.Name;

ctx.SaveChanges();

}

}

public void DeleteProduct(int productId)

{

using (var ctx = new TestBCSEntities1())

{

Product product = (from p in ctx.Product

where p.ProductId == productId

select p).First();

ctx.Product.DeleteObject(product);

ctx.SaveChanges();

}

}

}

}

If everything compiles, you should be good to go.

- You can test it by setting ProductServices as the startup project.

- Click CTRL+F5 (Start without debugging).

- Now add a service reference in the ProductTester project to this service.

- Set ProductTester as the startup project and add the following test code:

Please note: Rename ServiceReference2 to whatever name you’re using.

ServiceReference2.IProductService service = new ServiceReference2.ProductServiceClient();

int newID = service.CreateProduct(new ServiceReference2.ProductRequest() { Name = “ABNA” });

ServiceReference2.SingleProduct product = service.FindSpecificProduct(newID);

ServiceReference2.ProductRequest request = new ServiceReference2.ProductRequest() { ProductID = product.ProductID, Name = “New name” };

service.UpdateProduct(request);

service.DeleteProduct(newID);

List<ServiceReference2.SingleProduct> products = service.FindAllProducts().ToList<ServiceReference2.SingleProduct>();

foreach (var prod in products)

{

Console.WriteLine(“id: {0} name: {1}”, prod.ProductID, prod.Name);

}

Now add a breakpoint to the first line of the Main method of ProductTester, press F5, step thru it and see if everything works.

Since we’ve created a WCF 4 service, we won’t go thru the hassle and try to get everything to work in a separate application under a SharePoint site. Instead, we’ll just create a new web site for it.

- Open IIS Manager.

- Right-click the Application Pools node and choose Add Application Pool.

- Call it ServicesPool.

- Select the .NET Framework 4 version.

- Click OK.

- Select ServicesPool, then click Advanced Settings.

- Locate the Identity property and set it to a custom account which has access to the TestBCS database. This is important, otherwise the WCF service won’t work.

- Click OK.

- Stop and start the application pool.

- Right-click the Sites node and choose Add Web Site.

- Name it ServicesSite.

- Select the ServicesPool App pool.

- Specify the following Physical path: C:\inetpub\wwwroot\ServicesSite.

- Choose a free port number. We’ve chosen 8080.

- Click OK.

- Right-click ServicesSite and choose Add Application.

- Call the Alias ProductServices.

- Set the Physical path to C:\inetpub\wwwroot\ServicesSite.

- Click OK.

- Copy the entire contents of the ProductServices folder to C:\inetpub\wwwroot\ServicesSite.

- Adjust the web.config so that it contains the service info (the <system.serviceModel> part) from the app.config file of your service, like so:

<?xml version=”1.0″?>

<configuration>

<system.web>

<compilation debug=”true” targetFramework=”4.0″>

<assemblies>

<add assembly=”System.Data.Entity, Version=4.0.0.0, Culture=neutral, PublicKeyToken=B77A5C561934E089″/>

</assemblies>

</compilation>

</system.web>

<system.serviceModel>

<services>

<service name=”ProductServiceLibrary.ProductService”>

<host>

<baseAddresses>

<add baseAddress = “http://localhost:8080/ProductService/” />

</baseAddresses>

</host>

<!– Service Endpoints –>

<!– Unless fully qualified, address is relative to base address supplied above –>

<endpoint address =”” binding=”wsHttpBinding” contract=”ProductServiceLibrary.IProductService”>

<!–

Upon deployment, the following identity element should be removed or replaced to reflect the

identity under which the deployed service runs. If removed, WCF will infer an appropriate identity

automatically.

–>

<identity>

<dns value=”localhost”/>

</identity>

</endpoint>

<!– Metadata Endpoints –>

<!– The Metadata Exchange endpoint is used by the service to describe itself to clients. –>

<!– This endpoint does not use a secure binding and should be secured or removed before deployment –>

<endpoint address=”mex” binding=”mexHttpBinding” contract=”IMetadataExchange”/>

</service>

</services>

<behaviors>

<serviceBehaviors>

<behavior>

<!– To avoid disclosing metadata information,

set the value below to false and remove the metadata endpoint above before deployment –>

<serviceMetadata httpGetEnabled=”True”/>

<!– To receive exception details in faults for debugging purposes,

set the value below to true. Set to false before deployment

to avoid disclosing exception information –>

<serviceDebug includeExceptionDetailInFaults=”True” />

</behavior>

</serviceBehaviors>

</behaviors>

</system.serviceModel>

<system.webServer>

<modules runAllManagedModulesForAllRequests=”true”/>

</system.webServer>

<connectionStrings>

<add name=”TestBCSEntities” connectionString=”

metadata=res://*/Model.csdl|res://*/Model.ssdl|res://*/Model.msl;

provider=System.Data.SqlClient;provider connection string="data source=ASTRO\CTP0;initial catalog=TestBCS;integrated security=True;multipleactiveresultsets=True;App=EntityFramework"” providerName=”System.Data.EntityClient” />

<add name=”TestBCSEntities1″ connectionString=”

metadata=res://*/Model.csdl|res://*/Model.ssdl|res://*/Model.msl;

provider=System.Data.SqlClient;provider connection string="data source=ASTRO\CTP0;initial catalog=TestBCS;integrated security=True;multipleactiveresultsets=True;App=EntityFramework"” providerName=”System.Data.EntityClient” />

</connectionStrings>

</configuration>

You have arrived at a point where you have a working WCF service supporting the basic BCS stereotypes. Let’s test it by opening the ProductTester app and adding a service reference to: http://localhost:8080/ProductServices/service.svc. If your WCF service works, good things are about to happen.

It’s that time again, the end of a significant section. Btw, we still can’t be beat at “Way of the Exploding Fist”:

Full-fledged single table

We can use SPD to create an ECT that consumes our WCF service by pointing it at the MEX endpoint, which can be found at:

http://[server:port]/ProductServices/Service.svc/mex

In our case, the location is:

http://astro.loisandclark.internal:8080/ProductServices/Service.svc/mex

Please note: It’s important that you don’t use http://localhost anymore, because SPD won’t accept it.

Let’s walk thru the steps of creating the new ECT:

- Open SPD again for the site where you want to add the new ECT.

- Click External Content Types.

- In the ribbon, click External Content Type.

- In the External Content Type Operations section, click Click here to discover external data sources and define operations.

- Click Add Connection.

- In the External Data Source Type Selection dialog, choose WCF Service and click OK. The WCF Connection dialog opens.

- Enter the following Service Metadata URL: http://astro.loisandclark.internal:8080/ProductServices/Service.svc/mex.

- Make sure the Metadata Connection Mode is set to Metadata Exchange.

- Set the Service EndPoint URL to http://astro.loisandclark.internal:8080/ProductServices/Service.svc.

- Choose the following name: ProductServiceConnection.

- Click OK.

Finish the creation of operations for the new connection. We’ll start with the FindAll stereotype:

- In the Data Source Explorer, expand ProductServiceConnection.

- Expand the Web Methods node.

- Right-click FindAllProducts and choose New Read List Operation.

- Click Next twice.

- When you arrive at the Return Parameter Configuration page, you’ll see an error stating that you should pick an identifier.

- Select ProductID and check the Map to Identifier checkbox.

- The error disappears, click Finish.

Now, let’s work on the ReadSpecific stereotype:

- Right-click FindSpecificProduct and choose New Read Item Operation.

- Click Next.

- On the Input Parameters Configuration page, select productID and check the Map to Identifier checkbox.

- Click Next.

- On the Return Parameter page, select ProductID and click the MapToIdentifier checkbox.

- Click Finish.

We have support for the most basic operations. At this point, you will be able to create a read-only version of an external list based on the new ECT communicating with our WCF service. Since we’re going for the full-fledged implementation, we’ll be implementing the other stereotypes as well, starting with Create:

- Right-click CreateProduct and choose New Create Operation.

- Click Next.

- On the Input Parameters page, select ProductID and click the MapToIdentifier checkbox.

- Click Next and Finish.

Let’s move on to Delete:

- Right-click DeleteProduct and choose New Delete Operation.

- Click Next.

- On the Input Parameters page, select productId and click the MapToIdentifier checkbox.

- Click Finish.

Finally, finish up Update:

- Right-click UpdateProduct and choose New Update Operation.

- Click Next.

- On the Input Parameters page, select ProductID and click the MapToIdentifier checkbox.

- Click Finish.

You have defined all basic operations for an ECT, let’s finish the last details:

- On the breadcrumb menu, click New external content type.

- Choose the following name: ProductServiceECT and choose the same Display Name.

- Save it by pressing CTRL+S.

- Go on creating an external list for this ECT.

- Click Lists and Libraries.

- In the ribbon, click External List.

- Choose ProductServiceECT and click OK.

- Call the external list MyProductServiceList and click OK.

You’re almost ready to use the list, except that you need to set some permissions.

- Open SCA.

- Click Application Management.

- Click Manage service applications.

- Click Business Data Connectivity Service.

- From the ECB menu of ProductServiceECT, select Set Permissions.

- Give the desired users all permissions available and click OK.

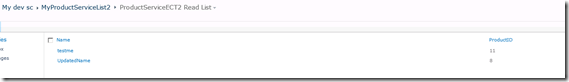

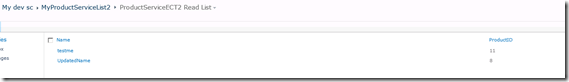

Open the browser and check out the external list (see Figure). Play around with it a bit to ensure that all operations work.

Time for a little treat. Truthfully, this is the funniest multiplayer game ever built, “Spy vs Spy”:

Deployment

Deploying a .NET assembly connector is easy, because a solution ( a .wsp file) is created automatically by VS. Deploying a solution created by SPD such as the one described in the previous section is not truly difficult, but a bit more esoteric. What you need to do is find a way to export the corresponding BDCD model and associated external lists, modify connection info, and install it on another environment using a feature.

Honestly, this is one of these moments were we feel closer to being sorcerers than being software developers. If you want to include external list instances too, you need to export the entire site, locate the artifacts you need, and make a SharePoint feature for them that you can deploy. Please follow along this walkthrough of the SPD solution incantation:

- Browse to the site containing the ECT you want to deploy.

- Go to Site Actions > Site Settings.

- In the Site Actions section, click the Save site as template link.

- Choose the following file name: MySiteTemplate.

- Choose the same Template name.

- Click OK. This creates a template in the Solutions Gallery.

- Click the go to the solution gallery link.

- Right-click MySiteTemplate and choose Save target as.

- Save MySiteTemplate.wsp in c:\temp.

- Start VS.NET 2010.

- Choose File > New > Project > SharePoint > Import SharePoint Solution Package.

- Click OK.

- Select a SharePoint site used for debugging. We’ll use http://astro/.

- Select Deploy as a farm solution.

- Click Next.

- Browse to the path for the existing solution package (MySiteTemplate.wsp).

- Click Next.

- Select the first item from the list, then press CTRL+SHIFT+END. This selects all items in the package.

- Click a checkbox. This causes all items to be unchecked.

- Locate the Lists you want to take with you (they have the type List Instance) and check them.

- Click Finish.

- If you get a warning that the list instances you’ve selected depends on Modules click Yes to include all required dependencies.

- Click OK once the solution import completed succesfully.

- Switch to SPD.

- Click External Content Types.

- Right-click ProductServiceECT and choose Export BDC Model.

- Choose the BDC Model Name: ProductServiceModel.

- Click OK.

- Save ProductServiceModel.bdcm in c:\temp.

- Go back to VS.

- Right-click the project and choose Add > Existing Item, then browse to c:\temp and import ProductServiceModel.bdcm.

- If you’re asled to add a .NET assembly LobSystem choose No.

- Click OK.

A feature is created automatically for the BDCM file and you’re ready to deploy your BCS solution. This also means that it’s reward time again, how about taking a look at a true classic: “Outrun”?

Standard Search support

The reason why you’d wanna be building BCS solutions lies in the fact that it integrates so well with SharePoint, something you can’t achieve by just building ASP.NET solutions. We’ve already seen external lists, now we’re gonna take a look at SharePoint search support.

You will find that having basic search support is relatively easy. That is, if you’re a SharePoint magician or have mental problems (as the Mongolian proverb says: “A detour of 10 miles is not much, if you’re a mad dog”).

To begin with, we will take some actions to make sure that it’s possible to click on search results and look at the details. Clicking a search result leads to the display of the ECT profile page, and we didn’t create one – yet. Profile pages are special web part pages that display info about external items. Here’s how you go about creating one:

- First set an ECT profile Page Host site by opening SCA.

- Click Application Management.

- Click Manage Service applications.

- Click Business Data Connectivity Service.

- In the ribbon, click Configure.

- Check Enable Profile Page Creation and define the site where profile pages will be created (in a special doc lib called _bdc). We’ve chosen http://astro as our host site.

Click OK.

Now, create a profile page for our ECT.

- Open SPD.

- Click External Content Types.

- Click ProductServiceECT.

- In the ribbon, click Create Profile Page.

This causes the creation of a profile page, which shows search result details.

For every ECT that supports basic search, you always need to implement a Finder and a SpecificFinder method and make some modifications to the BDCM model to support indexing (full crawl). We needed to provide those methods anyway, so as far as that’s concerned we’re good to go. If you want to support incremental crawls, you have to do more work (such as providing a last modified datetime), but in this article we’re not gonna go there.

The next procedure shows how to add search support for our custom BCS ECT:

Let’s start exporting our BCD model so we can modify it:

- Go to SPD.

- Click External Content Types.

- Right-click ProductServiceECT > Export BDC Model.

- Choose the following BDC Model Name: ProductServiceBDCM.

- Click OK.

- Save it in c:\temp.

To support indexing of an external system, you need to understand some parts of the bdc model and make some minor changes too. We’ll get IntelliSense support by copying the schema of the BDC model in the same folder as the model itself, and then edit it using VS.NET.

- Go to SPD.

- Click External Content Types.

- Right-click ProductServiceECT > Export BDC Model.

- Choose the following BDC Model Name: ProductServiceBDCM.

- Click OK.

- Save it in c:\temp.

- Rename ProductServiceBDCM.bdcbm to ProductServiceBDCM.xml.

- Go to C:\Program Files\Common Files\Microsoft Shared\Web Server Extensions\14

- TEMPLATE\XML and copy BDCMetadata.xsd to c:\temp.

- Right-click ProductServiceBDCM.xml and choose Open with > Microsoft Visual Studio 2010.

- Notice that the FindAllProducts method has a property called RootFinder. This method is called by the crawler during the indexing of product information. It’s allowed to give it any value you want (such as ‘x’) or no value at all, the property just needs to be present.

- Locate the element <LobSystem Type=”Wcf” Name=”ProductServiceConnection”>.

In it’s <Properties> section, add the following property (which also doesn’t require a value):

<Property Name=”ShowInSearchUI” Type=”System.String”></Property>

- Save the model.

- Rename it back to ProductServiceBDCM.bdcm.

- Open SCA.

- Click Application Management > Manage service applications > Business Data Connectivity Service.

- Delete the existing ProductServiceBDCM model.

- In the ribbon, click Import.

- Browse to ProductServiceBDCM.bdcm.

- Click Import. It will take some time before the BDCM file is validated.

- When finished, click OK.

- In the ECB menu of ProductServiceBDCM, choose Set Permissions and set user permissions for your test user.

Now, let’s index our Products data.

- Open SCA.

- Click Application Management.

- Click Manage service applications.

- Click Search Service Application.

- Click Content Sources.

- Click New Content Source.

- Call it ProductSource.

- n the COntent Source Type section, select Line of Business Data.

- In the External Data Source section, select Crawl selected external data source.

- Check ProductServiceConnection.

- In the Start Full Crawl section, check Start full crawl of this content source.

- Click OK.

On the Manage Content Sources pages, you will see that the crawling process is starting. Refresh the page to see what the status is, but remember that this can take a while. In a test we only had 2 entries in our products database, but the indexing process still took 4 minutes and 20 seconds.

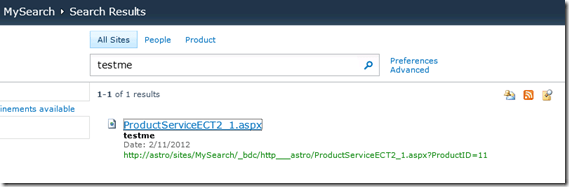

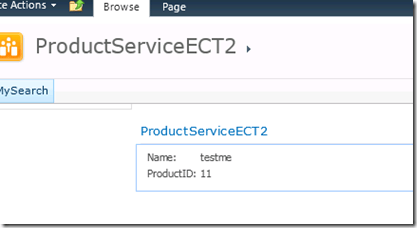

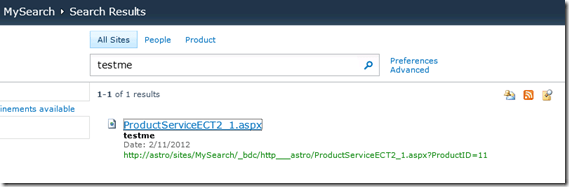

Eventually, the crawling process will end. Click Crawl Log to see how it went. You can click on the numbers to see more specifics about successes, failures and so on. After that, you can go to your Search Center (if you haven’t got one, create a new site collection using the Search Center template). Search for a product name (you have to type in the entire name) and you will get the appropriate search results:

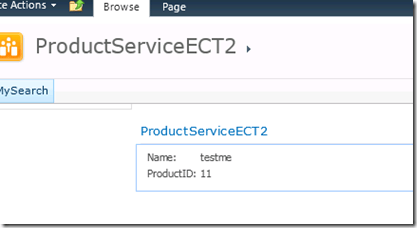

Here is the details view:

Please note: If the URL starts with bdc3, you haven’t correctly created a profile page, or created it after creating the content source and indexing it.

As a well-deserved reward, let’s take a look at the artworks of a game with a great gameplay: Fast Eddie. Not only that, it’s name always reminds us of an old friend: Eddy Anthonio, the former European karate champ. He was aptly called Fast Eddy too.

ECT Associations

As of yet, we haven’t discussed what we think is a truly awesome feature of BCS: the ability to define relationships between ECTs, also called ECT Associations. This means you now have the possibility to define a relationship between tables located in different databases, or between a web service and a database table. How cool is that?

BCS supports the following associations:

- One-to-many

- Many-to-many (not available in SPD)

- Self-referential (returns the same ECT)

- Reverse (returns parent entity)

In this section, we will explore a one-to-many relationship. To explain it, we’ll have to create a new database table called Product_part, that contains multiple product parts that all refer to a specific product. We won’t bother creating a FK relation for this table, since we’ll be creating another one, a BCS relation, pretty soon.

Here’s the script for creating the Product_Part table:

USE [TestBCS]

GO

/****** Object: Table [dbo].[Product_Part] Script Date: 2/14/2012 7:00:41 AM ******/

SET ANSI_NULLS ON

GO

SET QUOTED_IDENTIFIER ON

GO

CREATE TABLE [dbo].[Product_Part](

[ProductPartID] [int] IDENTITY(1,1) NOT NULL,

[ProductID] [int] NOT NULL,

[Name] [nchar](55) NOT NULL,

CONSTRAINT [PK_Product_Part] PRIMARY KEY CLUSTERED

(

[ProductPartID] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

We’ll move on and create a SQL Server ECT pointing to this table. This is convenient, because this is the last type of ECT we didn’t show yet in this article. In addition to the normal operations we’ve defined up until now, we’ll also be creating an association pointing to the ProductServiceECT. In other words, we’ll be defining a relationship between a database table and a web service.

- Open SPD.

- Click External Content Types.

- In the ribbon, click External Content Type.

- Call it ProductPartECT.

- Click the link Click here to discover external data sources and define operations.

- Click Add Connection.

- Select the SQL Server Data Source Type and click OK.

- Connect to the TestBCS database.

- Expand the TestBCS node in the Data Source Explorer.

- Expand the tables node.

- Right-click it and choose Create All Operations.

- On the Operation Properties dialog, click Next twice.

- Click Finish.

- Right-click Product_Part and choose New Association.

- Click Browse.

- Select ProductServiceECT.

- Click OK.

- Click Next.

- In the Data Source Elements pane, select ProductID.

- Check the Map to Identifier checkbox.

- Click Next twice.

- Click Finish.

- Save the ECT.

We have created the new ECT, let’s move on and assign the appropriate permissions so you’ll be able to access the ECT:

- Go to SCA > Application Management > Manage service applications.

- Click Business Data Connectivity Service.

- Select the ProductPartECT ECT and Set Permissions.

Now, you should be able to access the ECT. We’’ll create an external list based on this ECT:

- Switch back to SPD.

- Click Lists and Libraries.

- Click External List.

- Select ProductPartECT and click OK.

- Enter the name: ProductPartList.

- Click OK.

Please note: Be aware that sometimes it takes a couple of minutes before changes are synchronized. Don’t worry too much if you’re getting an access denied error.

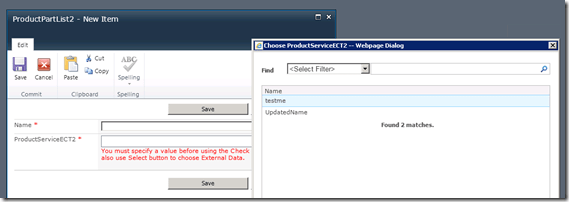

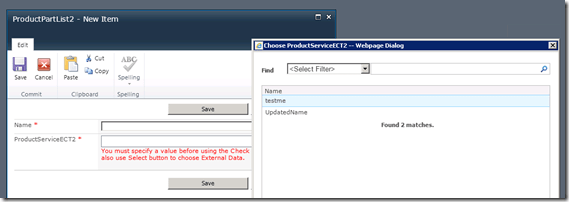

You can check that the association is valid by adding a new item to the ProductPartList. You’ll get to open the external item picker and choose a product to which the product part belongs.

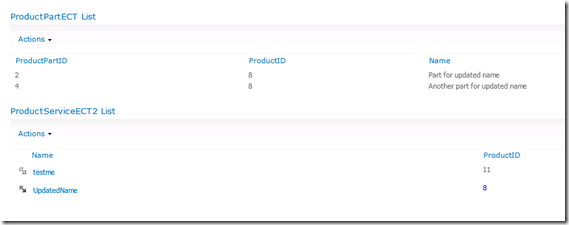

To better demonstrate the effects of an ECT association, we’ll use the Business Data List and Business Data Related List web parts, and connect them to each other to leverage the ECT association.

- Go to a web part page and choose Site Actions > Edit Page.

- Click Add a Web Part.

- Select the Business Data category.

- lick Business Data List and choose Add.

- Click Add a Web Part.

- Select Business Data Related List.

- Click Add.

- On the Business Data List web part, click Open the tool pane.

- Choose the following type: ProductServiceECT.

- Click OK twice.

- On the Business Data Related List, choose Open the tool pane.

- Choose the type: ProductPartECT.

- Click OK twice.

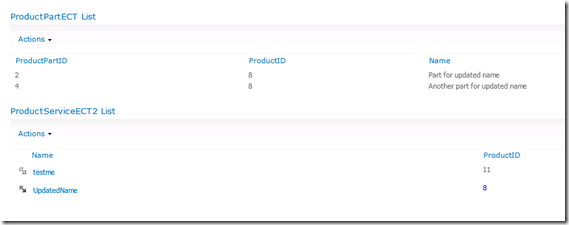

- On the ProductPartECT List web part, choose Connections > Get Related Item From > ProductServiceECT2 List.

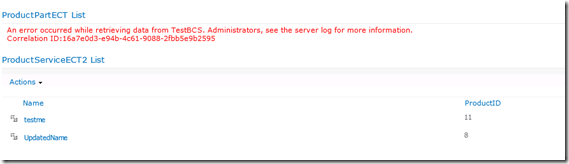

If you click an item in the ProductServiceECT2 List web part, you’ll notice the ProductPartECT List web part updates too.

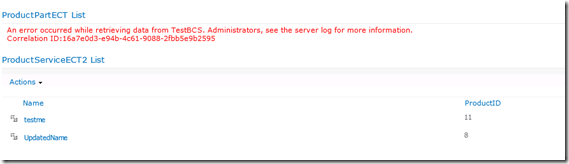

If you want, you can throw away the ECT association and observe what happens (it may be some moments before changes are sync’ed):

So, that’s that. Let’s move on to things that are really important: the Commodore 64 classic Beach-Head:

Secure Store

Since there’s nothing to fix, we first need to break something (and then fix it again). Up until now we’ve taken the shortcut when it comes to authenticating. On our dev machine, it’s fine authenticating using the current user which happens to be the administrator who happens to have access to the TestBCS database. It’s unthinkable (sorry, we couldn’t resist picturing what unthinkable looks like, if you were wondering what Samuel L. Jackson was doing in our article) that every user gets to have direct access to the database, not to mention the double hop issue. Btw, this is exactly what we are going to do: we won’t be mentioning the double hop issue again, if you want to learn more about that you’re on your own.

What you should do is leverage the Secure Store Service (SSS) and map the credentials of end users to one or more accounts that do have access to the TestBCS database. In this section, we’ll map our windows account to a SQL Server account (using another Windows account is fine too) that has access to the database. We’ve created a SQL Server user called testbcsuser. We won’t be describing how to add this user and give it appropriate permissions, because we’re getting near the end of the article and are getting tired.

Next, you need to create a new SSS target application that allows you to map client credentials to TestBCS database user credentials.

- Go to SCA > Application Management > Manage service applications > Secure Store Service.

- If you didn’t do it before, click the Generate New Key button in the ribbon.

- Click New to create a new target application.

- Choose as Target Application ID and Display Name: TestBCS.

- Enter an e-mail address.

- Click Next.

- Add two fields.

- Call the first field SQL User, Field Type User Name, unmasked.

- Call the second one SQL Password, Field Type Password, Masked.

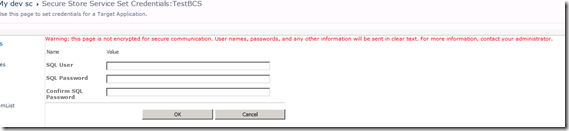

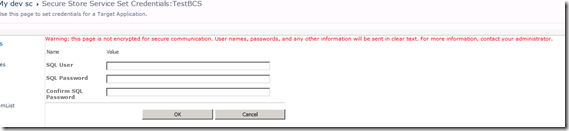

- Delete both Windows User Name and Windows Password entries. The page should look like the figure shown below.

- Click Next.

- Add your account as the Target Application Administrator.

- Click OK.

You’ve created a new SSS target application. Now, you have to configure the ECTs that use TestBCS database so that they’ll be using SSS to connect to the database. In the next procedure, we’ll show how to do this for the ProductPartECT.

- Switch to SPD.

- Click External Content Types.

- Click ProductPartECT.

- Click TestBCS (located after External System).

- Choose the following Authentication Mode: Impersonate Custom Identity.

- Enter the following Secure Store Application ID: TestBCS.

- Click OK.

- If you get a dialog box informing you that this decision affects ECTs, choose Yes.

Let’s see what impact this has made. If you go to external lists based on the ProductPartECT, you will be asked to provide SQL credentials to access the TestBCS database. After you have provided these once, you won’t have to do it again. It’s also possible that the administrator provides the credentials for you, but we’ll talk about that later.

- Switch to the browser.

- Go to the ProductPartList.

- Click the new link Click here to authenticate.

- In our case, a warning appears stating there our web site doesn’t have a security certificate. This is ok, because it’s a dev machine.

- Click Continue to this Site.

- The default SSS set credentials page appears and asks you for a SQL User and SQL Password (as shown in the next Figure). Enter these and click OK.

From now on, the BCS infrastructure then uses these credentials to login to the TestBCS database as long as BCS succeeds in logging in using these credentials. If you, as the administrator of your farm, want to influence these settings, you can go back to SCA and find the Target Application TestBCS on the SSS page.

- Check the TestBCS Target Application ID checkbox.

- In the ribbon, in the Credentials section, click the Set button.

You can enter a user name in the Credential Owner textbox to specify the credentials for a specific user. If you select a user and leave the credentials emppty (and click OK), you’re effectively resetting the user’s settings for the ECTs using this SSS target application. As a result, the next time the end user goes to the ProductPartList External List, he will be asked to authenticate again.

Let’s show the final reward: Daley Thompson’s Decathlon. If you know the game, you’ll never forget the experience of playing your joystick until your hands are so sore. There has never been a party game like this, nor will there ever be one…

Conclusion

Of course, you have been on a little trip in time and watched at some of the really old (but not oldest) video games. That in itself should have been worth the read, although we feel the other stuff may have been worthy of your time and attention too. BCS is developing into a mature and powerful product and SPS 2010 is the first version where we’re feeling that you can’t afford not to know it.